Extending XReason for Efficient Formal XAI and Adversarial Detection

Amira Jemaa, Adnan Rashid and Sofiene Tahar

Contact: a_jem@encs.concordia.ca

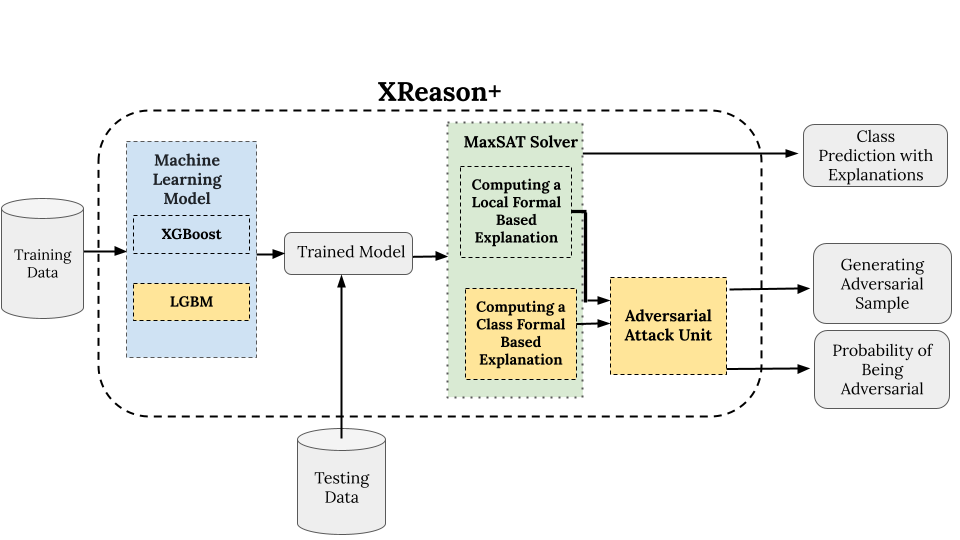

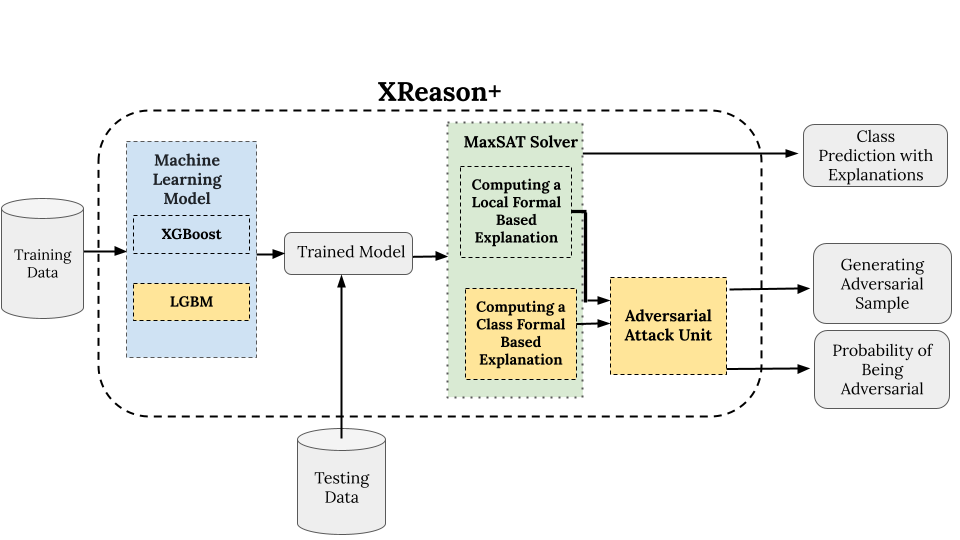

Explainable Artificial Intelligence (XAI) plays an important

role in improving the transparency and reliability of complex machine

learning models, especially in critical domains such as cybersecurity. Despite the prevalence of heuristic interpretation methods such as SHAP

and LIME, these techniques often lack formal guarantees and may produce inconsistent local explanations. To fulfill this need, few tools have

emerged that use formal methods to provide formal explanations. Among

these, XReason uses a SAT solver to generate formal instance-level explanation for XGBoost models. In this paper, we extend the XReason

tool to support LightGBM models as well as class-level explanations.

Additionally, we implement a mechanism to generate and detect adversarial examples in XReason. We evaluate the efficiency and accuracy of

our approach on the CICIDS-2017 dataset, a widely used benchmark for

detecting network attacks.

Amira Jemaa, “Efficient Explainable AI and Adversarial Robustness using Formal Methods“, MASc Thesis, Department of Electrical and Computer Engineering, Concordia University, Montreal, Quebec, Canada, December 2024.

[1] Jemaa, A., Rashid, A., Tahar, S. Extending XReason: Formal Explanations for Adversarial Detection . International Congress on Information and Communication Technology (ICICT), Lecture Notes in Networks and Systems (LNNS), Springer, London, United Kingdom, TO APPEAR (2025).

[2] Jemaa, A., Rashid, A., Tahar, S. Leveraging Formal Methods for Efficient Explainable AI . In Women in Formal Methods Workshop, Conference on Intelligent Computer Mathematics (CICM), Montreal, Canada, August 2024.

Github Repository : Extending XReason for Efficient Formal

XAI and Adversarial Detection

Publications

[1] Jemaa, A., Rashid, A., Tahar, S. Extending XReason: Formal Explanations for Adversarial Detection . International Congress on Information and Communication Technology (ICICT), Lecture Notes in Networks and Systems (LNNS), Springer, London, United Kingdom, TO APPEAR (2025).

[2] Jemaa, A., Rashid, A., Tahar, S. Leveraging Formal Methods for Efficient Explainable AI . In Women in Formal Methods Workshop, Conference on Intelligent Computer Mathematics (CICM), Montreal, Canada, August 2024.